OpenAI, the creator of the extremely popular AI chatbot ChatGPT, has officially shut down the tool it had developed for detecting content created by AI rather than humans. ‘AI Classifier’ has been scrapped just six months after its launch, apparently due to a ‘low rate of accuracy’, says OpenAI in a blog post.

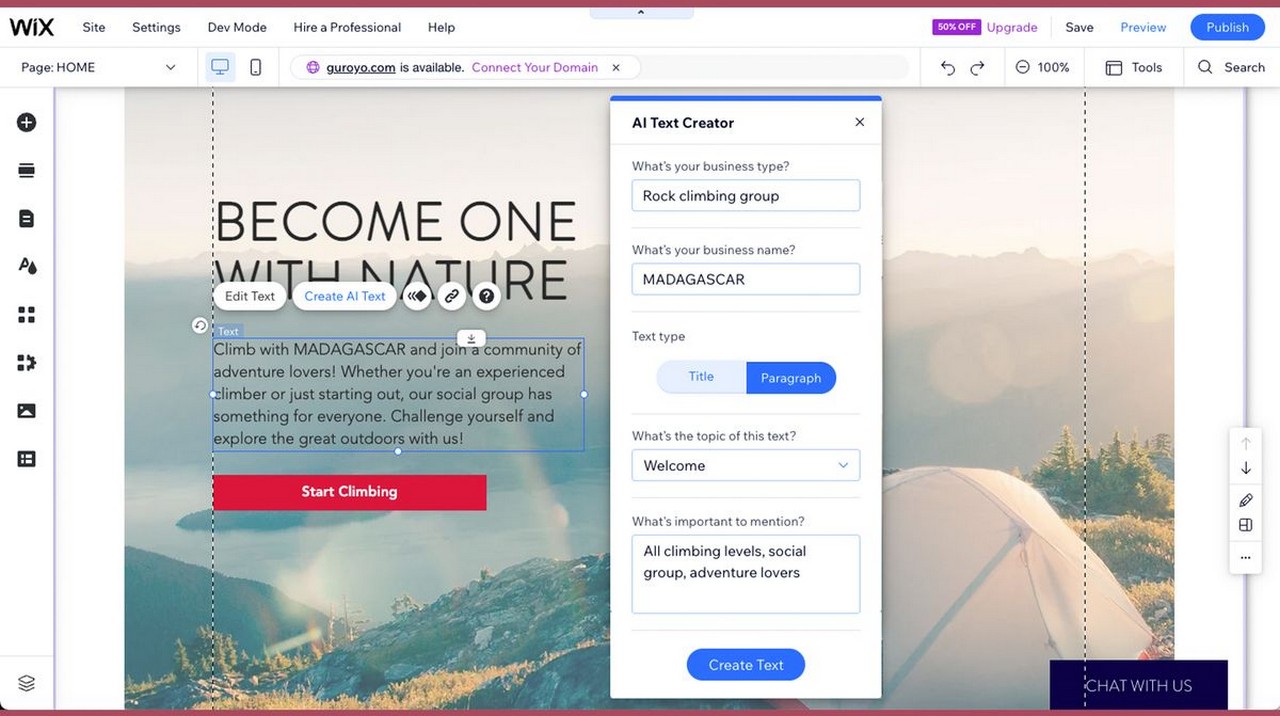

ChatGPT has exploded in popularity this year, worming its way into every aspect of our digital lives and spawning a slew of rival services and copycats. Of course, the flood of AI-generated content has raised concerns among a number of groups about inaccurate, inhuman content pervading our social media and newsfeeds.

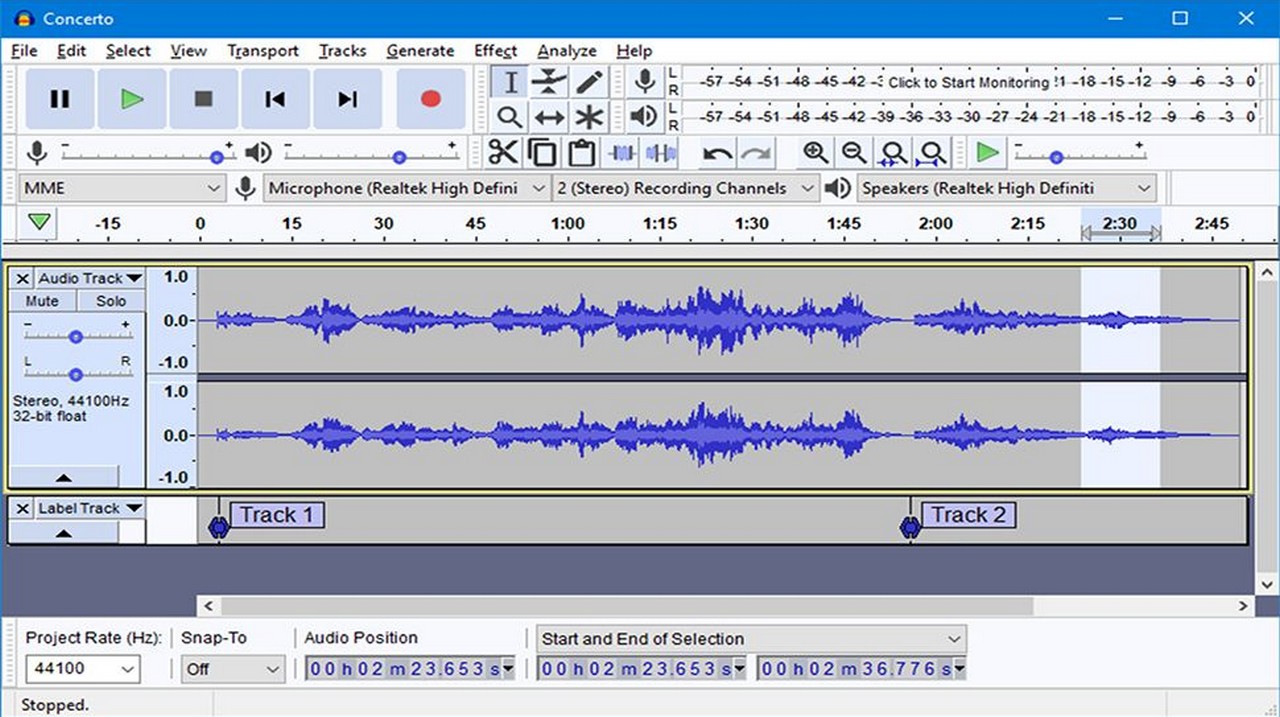

Educators in particular are troubled by the different ways ChatGPT has been used to write essays and assignments that are passed off as original work. OpenAI’s classifier tool was designed to address these fears not just within education but in wider spheres like corporate workspaces, medical fields, and coding-intensive careers. The idea behind the tool was that it should be able to determine whether a piece of text was written by a human or an AI chatbot, in order to combat misinformation.

Plagiarism detection service Turnitin, often used by universities, recently integrated an AI detection tool that has shown a very prominent fault: it is wrong in both directions. Students and faculty have gone to Reddit to protest the incorrect results, with students stating that their own original work is being flagged as AI-generated content, and faculty complaining about AI work passing through these detectors unflagged.

It’s an incredibly troubling thought: the idea that the makers of ChatGPT can no longer differentiate between what is a product of their own tool and what is not. If OpenAI can’t tell the difference, then what chance do we have? Is this the beginning of a misinformation flood, in which no one will ever be certain if what they read online is true? I don’t like to doomsay, but it’s certainly worrying.
